“Is this test statistically significant?”

“Yes.”

That one-word answer, “yes,” can be highly misleading. In Friday’s MarketingExperiments blog post, I discussed statistical significance and validity and why they are so important to getting the most from online testing. And while it is reassuring to know that a test is valid, what exactly does that “yes” answer mean? To find out, let’s take another look at three things: why I’m alive even though my mother never put me in a “fancy, newfangled car seat,” Helicobacter pylori, and the importance of understanding probability in marketing tests.

Probability: Something in a shade of gray

By human nature, we like to see things as black or white. Something either is or it isn’t. And that’s why the way science is reported in the modern media drives us so crazy. “Wait a minute, cheese is going to kill us? Five years ago scientists said cheese cured heart attacks.”

Why the disconnect?

I think Jorge Cham’s cartoon “The Science News Cycle” (from “Piled Higher and Deeper”) illustrates the problem perfectly. Scientists understand the inherent limitations on their work as they constantly strive to discover what is really going on in the world around us. But that doesn’t necessarily make a good headline, nor is it necessarily understood by the general population by the time the scientific discoveries reach them at the end of a complex game of “telephone.”

So I recently asked my sister-in-law, Dr. Danielle Dube, her perspective on this. She’s an Assistant Professor of Chemistry and Biochemistry at Bowdoin College and is currently researching the bacterial pathogen Helicobacter pylori. She said, “When I speak to my colleagues, we talk about our observations from laboratory experiments, what the results suggest, and possible conclusions to draw from the work. But when the research reaches a wider audience, those nuances tend to get lost as we try to communicate our efforts in a compelling way.”

Now, you might be testing on landing pages and email rather than Helicobacter pylori, but notice how cautiously Dr. Dube speaks about her research findings, understanding the inherent limitations in trying to discover new knowledge… “results suggest”… “possible conclusions”…

Jumping to conclusions

As I said at the beginning of this blog post, that “yes” answer to the statistical significance question may not be as definitive as it sounds. It simply means that you’ve reached a certain pre-established level of probability in a test.

At MECLABS, we normally aim for a 95% level of statistical confidence for our tests (that is, a significance threshold of p = 0.05).

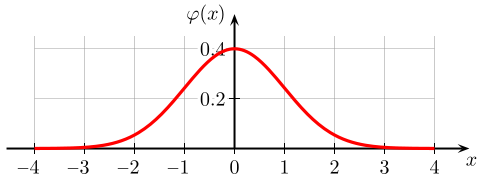

For those statistics fans out there, it means, roughly speaking, that there’s a 95% probability that the real (but unknown) answer falls within about two standard errors of the sample mean (the point at the center of that really fat chunk of the normal distribution, or Gaussian bell curve). In other words, there is still a 5% chance that the real answer lies within one of the long tails.
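To make the “about two standard errors” idea concrete, here is a minimal Python sketch for a conversion rate. It assumes the normal approximation described above. The function name `conversion_interval` and the visitor counts are hypothetical, chosen only for illustration, and are not MECLABS data or tooling.

```python
from math import sqrt
from statistics import NormalDist


def conversion_interval(conversions, visitors, confidence=0.95):
    """Normal-approximation interval for a conversion rate (a sketch)."""
    rate = conversions / visitors
    # z is how many standard errors cover the central `confidence`
    # share of the bell curve (close to 2 for 95%).
    z = NormalDist().inv_cdf(0.5 + confidence / 2)
    std_err = sqrt(rate * (1 - rate) / visitors)
    return rate - z * std_err, rate + z * std_err, z


# Hypothetical numbers: 120 conversions from 2,000 visitors.
low, high, z = conversion_interval(120, 2000)
print(f"z = {z:.2f}; interval = {low:.4f} to {high:.4f}")
```

The interval narrows as the visitor count grows, which is one reason small tests so often fail to reach the 95% level.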

But wait, there’s more…

What that p-value speaks to is not whether your landing page treatment is “better than” the control (statistics, like justice, is blind), but only whether it is “different from” the control.

Again, for the statistics fans, you’ve simply found that there is sufficient evidence, per the standards you set, that the null hypothesis (i.e., that “the control and treatment are the same”) should be rejected.

“So, if you find that you must reject the hypothesis that the two are the same, you are basically accepting that they are ‘different’ from one another—different, in particular, in the characteristic on which you compared them; be it conversion rate, click-through rate or whatever,” said Bob Kemper, the Senior Director of Sciences here at MECLABS. “The value judgment of whether that difference is ‘better’ or ‘worse’ is one that you assign based upon your research or optimization goals,” he added.
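The “different, not better” point can be sketched in a few lines of Python. This example assumes a pooled two-proportion z-test, which is one common way to compare conversion rates and not necessarily the method MECLABS uses. The function name `two_proportion_p_value` and all counts are hypothetical.

```python
from math import sqrt
from statistics import NormalDist


def two_proportion_p_value(conv_a, n_a, conv_b, n_b):
    """Two-sided p-value for H0: both pages convert at the same rate."""
    rate_a, rate_b = conv_a / n_a, conv_b / n_b
    # Under the null hypothesis the two pages share one pooled rate.
    pooled = (conv_a + conv_b) / (n_a + n_b)
    std_err = sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (rate_b - rate_a) / std_err
    # Two-sided: a large gap in either direction counts as "different".
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))
    return z, p_value


# Hypothetical counts: the treatment wins in one test and loses in the other.
z_up, p_up = two_proportion_p_value(100, 2000, 150, 2000)
z_down, p_down = two_proportion_p_value(150, 2000, 100, 2000)
print(f"treatment higher: z = {z_up:+.2f}, p = {p_up:.4f}")
print(f"treatment lower:  z = {z_down:+.2f}, p = {p_down:.4f}")
```

Both runs produce the same p-value. Only the sign of z tells you which page came out ahead, and deciding whether that direction is “better” is the value judgment Kemper describes.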

On auto and marketing test safety

Which is why scientists report their findings in such a cautious fashion. To go back to the car seats for a moment, no scientist reported, “Have your kid in a car seat or they will die.” The scientists basically said, “That kid has a lot better chance of surviving a car crash if he’s in a car seat.” Or to hear it straight from the source…

Effectiveness of Child Safety Seats vs Seat Belts in Reducing Risk for Death in Children in Passenger Vehicle Crashes

Compared with seat belts, child restraints, when not seriously misused (e.g., unattached restraint, child restraint system harness not used, 2 children restrained with 1 seat belt) were associated with a 28% reduction in risk for death (relative risk, 0.72; 95% confidence interval, 0.54-0.97) in children aged 2 through 6 years after adjusting for seating position, vehicle type, model year, driver and passenger ages, and driver survival status. When including cases of serious misuse, the effectiveness estimate was slightly lower (21%) (relative risk, 0.79; 95% confidence interval, 0.59-1.05).

– Michael R. Elliott, PhD; Michael J. Kallan, MS; Dennis R. Durbin, MD, MSCE; Flaura K. Winston, MD, PhD
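The figures in that abstract can be unpacked with a short Python sketch. It assumes the usual reading of relative risk, where 1.0 means no difference, so a 95% confidence interval that contains 1.0 has not ruled out “no effect.” The helper names are hypothetical; the numbers come straight from the quoted passage.

```python
def effectiveness(relative_risk):
    """Risk reduction implied by a relative risk (0.72 -> 28%)."""
    return 1 - relative_risk


def excludes_no_effect(ci_low, ci_high):
    """True when the interval does not contain 1.0 (no difference)."""
    return not (ci_low <= 1.0 <= ci_high)


# (relative risk, CI lower bound, CI upper bound) from the quoted abstract.
estimates = {
    "not seriously misused": (0.72, 0.54, 0.97),
    "including serious misuse": (0.79, 0.59, 1.05),
}

for label, (rr, ci_low, ci_high) in estimates.items():
    print(
        f"{label}: {effectiveness(rr):.0%} reduction, "
        f"interval excludes 1.0: {excludes_no_effect(ci_low, ci_high)}"
    )
```

The second interval (0.59 to 1.05) includes 1.0, which is why the authors stop short of a flat declaration when misuse cases are included.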

This is why you cannot rely on just one test to solve all of your problems. I’d love to be able to offer you “ten quick and easy secrets to overnight success,” but truly benefiting from online marketing tests requires that you:

- Create a culture of testing that leverages the testing-optimization cycle – Test. Improve. Test again. The more you test, the more you begin to discover valuable patterns in your customers’ behavior and preferences, and the more you can advance all of your marketing efforts over time.

- Embrace doubt – Even if you find something to be true today, will it change? If you are selling tours of the pyramids in Egypt and have tested and optimized your messages, how long do you think they will remain optimal? Might your potential customers significantly change in their perceptions and priorities over time? Might changes in the world economy or unfolding news about natural disasters or political unrest affect their motivations and concerns, and so their response to different messages?

Our world and cultures, our perceptions and behaviors are changing at an unprecedented and yet ever-increasing rate, enabled by and mirroring the pace of advances in technology and communication. Marketers who, in recognition of this, embrace a healthy doubt and continue to adapt using those same technologies will succeed and outperform the rest.

- Be humble – It’s not often you hear a really, really smart guy trumpeting the horn of humility, but here’s what Bob Kemper told me. “My own observations have led me to believe that the greatest single predictor of failure among marketing executives is hubris: the belief that they have their industry and their customers all figured out, and already know what will work best,” Kemper said.

What makes the skilled evidence-based marketer so enduringly successful is that she makes her decisions based on facts, not opinion; that is, using data, not just intuition.

“This stems, I believe, from a healthy humility: the acceptance that she doesn’t (and can’t) have absolute certainty, but the commitment to getting as close as she possibly can,” Kemper said. “So, what separates the skilled evidence-based marketer from the rest is the ability to turn sound data into sound decisions, ones that strike the optimum balance of risk and reward. This is achieved not just by using the right tools, but by using those tools right. Put the same chisel in the hands of a toddler and in those of a sculptor and observe what a difference this can make.”

Related resources

Optimization Summit 2011 – June 1-3

Online Marketing Tests: How do you know you’re really learning anything?

FREE subscription to more than $10 million in marketing research

The Fundamentals of Online Testing online training and certification course

Cartoon comic attribution: “Piled Higher and Deeper” by Jorge Cham www.phdcomics.com
