I am alive today. Even though my Mom never used one of those “fancy” car seats.

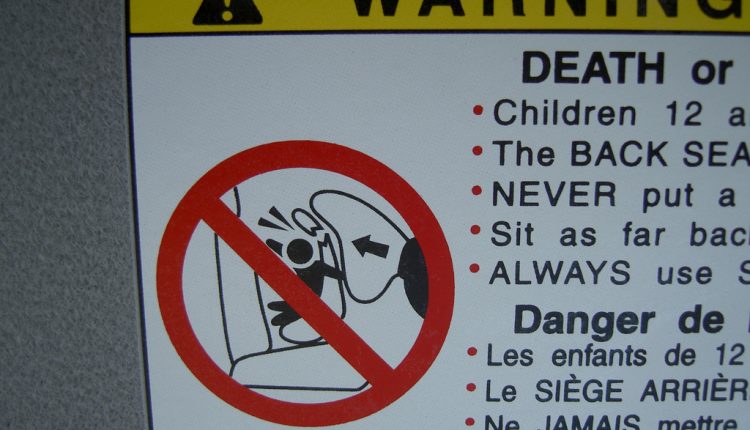

In today’s blog post, I’ll discuss what we can learn from debates about child safety technology to help you make sure you are really learning from your online testing. I’ll specifically cover some crucial but oft-misunderstood topics – statistical significance and validity.

In doing so, I hope to help you understand what you can really get from your online test results.

I’ve been thinking about the topic more lately, as I’ve been asked to speak about validity at our upcoming Optimization Summit (do you have a case study you’d like to present? We’ve just opened our call for speakers). While it’s become quite easy to simply plop two pages into Google Website Optimizer or Adobe Test&Target, it has become just as easy to jump to erroneous conclusions based on those tests that can give you unjustified confidence in what you think you’ve learned.

Don’t believe your own eyes

If you’re sandwiched between a set of parents and a child or two, you’ve likely heard something along the lines of, “Well, when you were a kid we never did X [some sort of safety-related endeavor] and you turned out fine.”

This is a very hard statement to argue with, because it contains all true facts. But let’s break it down for a moment as it relates to actually learning from online testing and car seats. Here are the two problems with your parents’ statement:

- They are basing their knowledge on just one observation or perhaps two, three, or four if you have siblings (in other words, they don’t have a statistically significant sample size – this can be difficult to determine, even with a testing platform)

- They are not exploring the alternative (for online marketing tests, this would mean conducting double-blind, randomized tests to compare different approaches – the easy part with testing platforms)

I believe this is one major area where the general public has a hard time understanding scientific research, because you must essentially trust a bunch of smarty-pants scientists more than your own common sense. Or as Chico Marx put it in Duck Soup, “Well, who you gonna believe, me or your own eyes?”

Strength in numbers

Don’t be so sure about your own eyes. Experimental scientists who’ve “been around the block” know how dangerous it is to draw general conclusions from special cases, in this case the experience of one household.

That’s why they base their knowledge on significantly more observations. In one example study of car seat safety, “Complete interview data were obtained on 7,151 children in 6,591 crashes representing an estimated 120,646 children in 116,503 crashes in the study population.”

What the scientists are trying to do is study a large enough sample size to ensure with a high probability that the knowledge they are gaining in their subset of all possible real-world instances truly reflects the most likely outcome in all real-world instances.

In other words, they are fighting random chance. When you have only the small number of observations you can see with your own eyes, there is a much greater probability that you’ve simply observed a quirk like, say, flipping a quarter and getting four “tails” in a row. Your observations don’t negate the fact that the quarter has another side.
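To put a number on that quirk, here is a minimal sketch, assuming a fair quarter and independent flips. The function name `prob_all_tails` is made up for this illustration.

```python
from fractions import Fraction


def prob_all_tails(flips: int) -> Fraction:
    """Chance that a fair coin lands tails on every one of `flips` independent flips."""
    if flips < 0:
        raise ValueError("flips must be non-negative")
    # Each flip is tails with probability 1/2, and independent flips multiply.
    return Fraction(1, 2) ** flips


if __name__ == "__main__":
    for flips in (1, 4, 10):
        p = prob_all_tails(flips)
        print(f"{flips} flips, all tails: {p} (about {float(p):.2%})")
```

Four tails in a row comes up 1 time in 16, or about 6% of the time. That is common enough that a handful of observations can easily mislead you.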

Marketing lesson

“What scientists do is learn a set of specialized principles, consisting of procedures, math and logic, then apply and adapt them to whatever they study,” said Bob Kemper, the Senior Director of Sciences here at MECLABS. “The procedures make sure they get good data. The math makes sure they get the right answer(s). The logic makes sure they turn the right answers into the right actions.”

As Bob says, a sound methodology is very important. But you’ve got to get the math right, too.

That’s why it’s not enough to “see” which landing pages or emails perform best in a test. You must ensure that you have achieved statistical significance. In other words, don’t just trust your “horse sense.” Make sure your test is statistically valid before basing your decision on it.
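One common way to check significance for a two-page test is a two-proportion z-test. The sketch below is an illustration under stated assumptions, not a description of what any particular testing platform does. It assumes each visitor is an independent observation and that samples are large enough for the normal approximation. The function name and the visitor counts are hypothetical.

```python
import math


def two_proportion_z_test(conv_a, n_a, conv_b, n_b):
    """Return (z, two-sided p-value) for the difference between two conversion rates.

    conv_a, conv_b: number of conversions on each page
    n_a, n_b:       number of visitors shown each page
    """
    if n_a <= 0 or n_b <= 0:
        raise ValueError("each page needs at least one visitor")
    rate_a = conv_a / n_a
    rate_b = conv_b / n_b
    # Pooled rate under the assumption that both pages truly convert equally.
    pooled = (conv_a + conv_b) / (n_a + n_b)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if std_err == 0:
        return 0.0, 1.0  # no variation at all, so no evidence of a difference
    z = (rate_b - rate_a) / std_err
    # Two-sided p-value from the standard normal distribution.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


if __name__ == "__main__":
    # Hypothetical data: the same 10% vs. 13% conversion rates at two sample sizes.
    for visitors in (100, 1000):
        conv_a, conv_b = visitors // 10, visitors * 13 // 100
        z, p = two_proportion_z_test(conv_a, visitors, conv_b, visitors)
        print(f"{visitors} visitors per page: z = {z:.2f}, p = {p:.3f}")
```

With 100 visitors per page, the 10% versus 13% gap is not significant at the conventional 5% level. With 1,000 visitors per page, the same gap is. The rates you “see” are identical in both cases. Only the amount of evidence behind them changes.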

And that takes far more than just looking for a set of green bars on a testing platform, due to these possible validity threats:

- History Effects: The effect on a test variable by an extraneous variable associated with the passage of time.

- Instrumentation Effects: The effect on a test variable by an extraneous variable associated with a change in the measurement instrument.

- Selection Effects: The effect on a test variable, by an extraneous variable associated with different types of subjects not being evenly distributed between experimental treatments.

- Sampling Distortion Effects: The effect on the test outcome caused by failing to collect a sufficient number of observations.
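Of these four threats, only sampling distortion can be estimated with arithmetic before the test starts. The sketch below uses the standard normal-approximation formula for comparing two conversion rates. The 5% significance level and 80% power defaults are conventional assumptions rather than figures from this post, and the function name is hypothetical. It does nothing to guard against history, instrumentation, or selection effects.

```python
import math
from statistics import NormalDist


def visitors_per_treatment(base_rate, expected_rate, alpha=0.05, power=0.80):
    """Rough number of visitors needed per treatment to detect the given lift.

    base_rate:     conversion rate of the control page (e.g. 0.10)
    expected_rate: conversion rate you hope the treatment achieves (e.g. 0.13)
    alpha:         two-sided significance level (assumed default: 5%)
    power:         chance of detecting the lift if it is real (assumed default: 80%)
    """
    if not (0 < base_rate < 1 and 0 < expected_rate < 1):
        raise ValueError("rates must be strictly between 0 and 1")
    if base_rate == expected_rate:
        raise ValueError("rates must differ; there is no lift to detect")
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)
    z_power = NormalDist().inv_cdf(power)
    # Sum of the two binomial variances, one per treatment.
    variance = base_rate * (1 - base_rate) + expected_rate * (1 - expected_rate)
    n = (z_alpha + z_power) ** 2 * variance / (expected_rate - base_rate) ** 2
    return math.ceil(n)


if __name__ == "__main__":
    # Hypothetical rates: a smaller expected lift needs far more visitors.
    for target in (0.13, 0.11):
        n = visitors_per_treatment(0.10, target)
        print(f"10% -> {target:.0%}: about {n} visitors per treatment")
```

The smaller the difference you are trying to detect, the more observations you need, which is why a subtle page tweak can take much longer to validate than a radical redesign.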

I don’t mean to dissuade you from optimization testing. It is certainly a powerful way to dramatically improve results in a short period of time.

But, I do want you to be able to figure out if you are gaining a true understanding of what is really going on with your audience.

As a quip often attributed to Albert Einstein goes, “A little knowledge is a dangerous thing. So is a lot.” Basing vital business decisions on a few casual observations, or on a heap of data you can’t make sense of, is just as risky as taking a random guess.

In fact, it’s more so because, while you know full well when you’re making a “SWAG” based purely on intuition, collecting “data” (even a tiny bit) can embolden you to take much bigger risks, even when they are unwarranted.

“Scientists use mathematical principles developed and refined over more than 200 years to ‘grow up’ from a child-like ‘Yes/No’ picture of things—like ‘Car Seats=worthless, waste of money’ or ‘Car Seats=the final answer to child safety’—to a more mature (and practical) ‘Better / By-how-much’ one that weighs the risks and the costs,” Kemper said.

Which leads to our next blog post – a topic that goes hand in hand with statistical significance and validity – probability. Please join us Monday right here on the MarketingExperiments blog.

Related resources

FREE subscription to more than $10 million in marketing research

The Fundamentals of Online Testing online training and certification course

Determining if a Data Sample is Statistically Valid

Optimization Testing Tested: Validity Threats Beyond Sample Size

Photo attribution: Dan4th