This week at the San Francisco leg of MarketingSherpa’s B2B Summit 2011, Brian Carroll, Executive Director of Applied Research, MECLABS, and Nicolette Dease, Program Manager, MECLABS Leads Group, provided tactical training on optimizing lead generation.

Part of the presentation was a case study of a test that compared several lead sources to find the most efficient list source.

The objective of the test was to determine if higher-cost/higher-quality data can drive down overall cost-per-lead, and the primary research question was, “Which campaign data source will drive the most efficient value?”

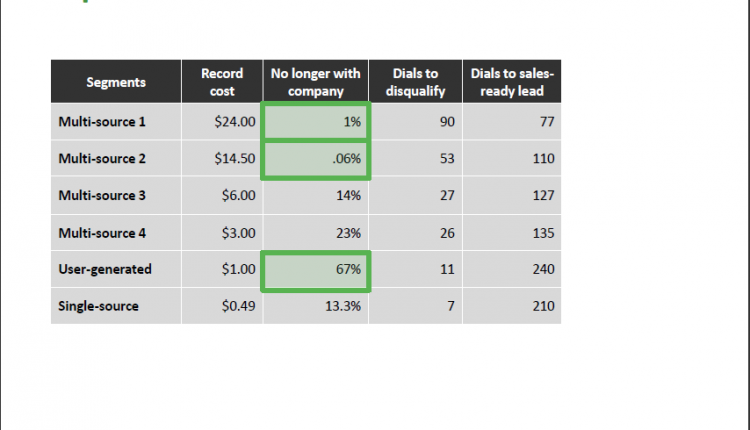

The test design looked at six list segments, with 300 accounts and 80 hours of calling per segment. Let’s first look at how much each record cost to acquire during discovery:

- Multi-source 1, validated by phone based on role – record cost $24

- Multi-source 2, validated by phone based on title – record cost $14.50

- Multi-source 3, validated by phone – record cost $6

- Multi-source 4, validated by email – record cost $3

- User-generated, validated by business cards – record cost $1

- Single-source, no validation – record cost $0.49

Results of the test

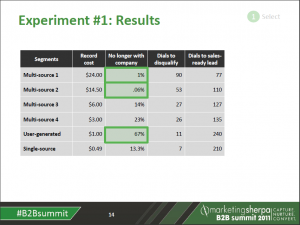

After discovery, every lead in each segment, whether or not it had been validated by phone during discovery, needed the human touch: a phone call to validate the lead. This table looks at the validation calls for each lead segment:


As the highlighted green boxes show, there is a dramatic difference in list quality: the segments with higher up-front record costs required many more calls per disqualified lead and fewer calls per sales-ready lead. In other words, the higher-cost lists are also the higher-quality lists.

Nicolette says, “Not only are you paying for the data, but you are also paying someone to find out that lead is no longer with the company.”

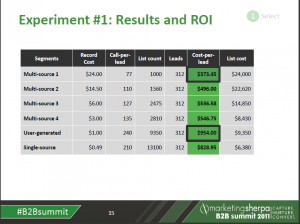

So let’s take a look at how the numbers add up when you consider the cost of the human touch. This table looks at the cost-per-lead for each segment:


Looking at these results, Nicolette points out that the role-based discovery segment (Multi-source 1 in the list of segments above) actually cost less per lead than every other segment, and was dramatically less expensive than the user-generated segment once you factor in the staff time that must be devoted to that list.
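To see how a $24 record can end up cheaper than a $0.49 one, here is a minimal Python sketch of the cost-per-lead arithmetic. It assumes cost per lead is a segment's data spend (record cost × 300 accounts) plus its teleprospecting spend (80 calling hours × an hourly rate), divided by the number of sales-ready leads the segment produced. The function name `cost_per_lead`, the $50 hourly rate, and the lead counts in the usage example are hypothetical placeholders for illustration only; they are not figures from the MECLABS test.

```python
# Record costs per segment, as listed in the test design above.
RECORD_COST = {
    "Multi-source 1 (phone, role)": 24.00,
    "Multi-source 2 (phone, title)": 14.50,
    "Multi-source 3 (phone)": 6.00,
    "Multi-source 4 (email)": 3.00,
    "User-generated (business cards)": 1.00,
    "Single-source (no validation)": 0.49,
}

ACCOUNTS_PER_SEGMENT = 300   # from the test design
CALL_HOURS_PER_SEGMENT = 80  # from the test design


def cost_per_lead(record_cost: float,
                  sales_ready_leads: int,
                  hourly_rate: float,
                  accounts: int = ACCOUNTS_PER_SEGMENT,
                  call_hours: float = CALL_HOURS_PER_SEGMENT) -> float:
    """Hypothetical helper: (data spend + calling spend) / sales-ready leads."""
    if sales_ready_leads <= 0:
        raise ValueError("segment produced no sales-ready leads")
    data_cost = record_cost * accounts       # what the list itself cost
    calling_cost = hourly_rate * call_hours  # what the human touch cost
    return (data_cost + calling_cost) / sales_ready_leads


if __name__ == "__main__":
    HOURLY_RATE = 50.0  # HYPOTHETICAL cost of one teleprospecting hour
    # HYPOTHETICAL sales-ready lead counts, chosen only to illustrate the math;
    # they are NOT the results of the MECLABS test.
    examples = [("Multi-source 1 (phone, role)", 12),
                ("Single-source (no validation)", 3)]
    for name, leads in examples:
        cpl = cost_per_lead(RECORD_COST[name], leads, HOURLY_RATE)
        print(f"{name}: ${cpl:,.2f} per lead")
```

With these made-up inputs, the $24 list works out to $933.33 per lead and the $0.49 list to $1,382.33 per lead. Because every segment received the same 80 hours of calling, the teleprospecting spend is identical across segments in this sketch; what moves cost per lead is how many of those hours turn into sales-ready leads.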

List efficiency

“Three to five percent of people change at least one thing on their business card each year,” Brian said. Once you start adding it up, if the list is based on data a few years old, up to half of your list could have incorrect data. With that in mind, “cheap” lists can become very expensive once you factor in all the time spent validating leads and weeding out the stale records they contain.

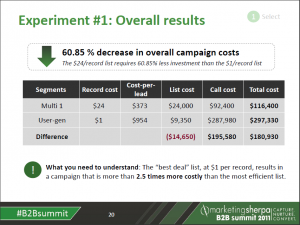

This table summarizes the overall results of the test:


Nicolette provides the three key takeaways from this cost-per-lead test:

- “Cheap” data in terms of record cost is actually expensive in the long run

- The less you spend on data, the more you will spend on teleprospecting

- View list acquisition as strategic, not simply transactional

These are Nicolette’s learnings. I’d challenge you to start your own lead optimization and testing process to discover what works best for your company.

