One of the most discussed sends in email marketing is the welcome email, and for good reason. This email is often the first direct contact a customer has with your brand, so there is real pressure to get it right. That’s where testing comes into play.

In her session at MarketingSherpa Email Summit 2015, Diana Primeau, Director of Member Services, CNET, spoke on the importance of testing her brand’s welcome and nurturing series as well as how the brand utilized segmentation to create a more personalized content series. Described by Erin Hogg, Reporter, MarketingSherpa, as a “mentor and teacher here at MarketingSherpa,” Diana has spoken at several brand events and has an impressive amount of experience when it comes to testing.

[Note: MarketingSherpa is the sister company of MarketingExperiments]

The typical CNET user is someone who is interested in technology and wants to research certain technology to reach a buying decision. According to Diana, CNET is the largest tech site in the world, and the site sees over 100 million unique visitors every month. CNET also has a large newsletter portfolio, which includes 23 editorial newsletters that are hand-curated by the brand’s editors, two large marketing newsletters and three deal space newsletters.

“I feel pretty fortunate. I get to play in a pretty large sandbox,” she joked.

One of the most dynamic tests Diana presented during her Summit 2015 session compared CNET’s existing welcome email (the Control) against five different treatments. This test was conducted with an A/B split design, and the changes to the treatments were made according to three factors:

- Content

- Subject lines

- Advertisements
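The article does not describe how CNET divided its list among the Control and the five treatments. As a minimal sketch of one common approach to an A/B split, the example below assigns each subscriber to a variant by hashing an identifier, so the same subscriber always lands in the same group. The function name `assign_variant`, the variant labels and the use of an email address as the identifier are all assumptions made for illustration.

```python
import hashlib

# Hypothetical labels: one Control plus five treatments, as in the test described here.
VARIANTS = ["Control", "Version 2", "Version 3", "Version 4", "Version 5", "Version 6"]


def assign_variant(subscriber_id: str, variants: list[str] = VARIANTS) -> str:
    """Deterministically map a subscriber to one variant.

    Hashing the identifier gives a stable, roughly even split without
    having to store the assignment anywhere.
    """
    digest = hashlib.sha256(subscriber_id.encode("utf-8")).hexdigest()
    bucket = int(digest, 16) % len(variants)
    return variants[bucket]


if __name__ == "__main__":
    # Made-up addresses, used only to show the call.
    for email in ["reader1@example.com", "reader2@example.com"]:
        print(email, "->", assign_variant(email))
```

Because the assignment depends only on the identifier, re-running the split does not move a subscriber from one treatment to another mid-test.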

Watch the video excerpt below to learn how these drastic changes compared to Diana’s original hypothesis of including as much information for the user as possible.

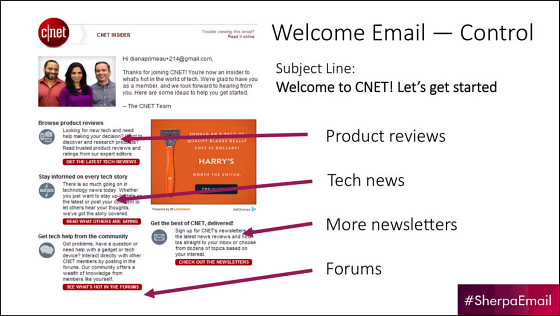

Control

“We had this crazy hypothesis, and it was ‘Tell the user everything,'” Diana said. “We wanted them to know everything about our brand, and this email actually performed very well.”

This initial email included the subject line that CNET always used in its welcome emails, an advertisement and four sections:

- Product reviews

- Tech news

- More newsletters

- Forums

However, though the Control performed well, there was nothing to benchmark this first send against. Together with a summer intern, Diana determined the three areas of this send she wanted to test and developed the corresponding treatments.

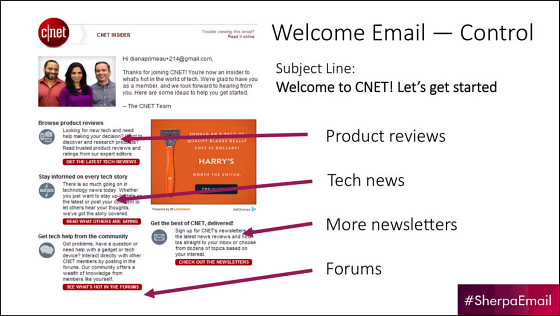

Version 2 and Version 3

Version 2 of the welcome email condensed the Control’s long copy into a simple introductory message and a simple call-to-action, which directed users to the homepage. This version also added an image of a CNET editor.

Version 3 featured the same content from Version 2, but arranged it in a slightly different way. Also, the picture of the editor was replaced with an ad unit.

“Advertising and ad units in our newsletters are very much an important part of our strategy,” Diana explained. “They’re a very nice revenue stream for us, and they help us fund some of the initiatives.”

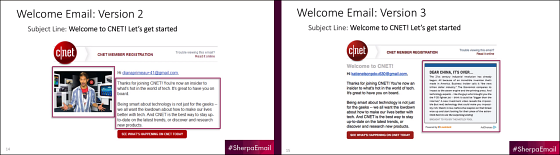

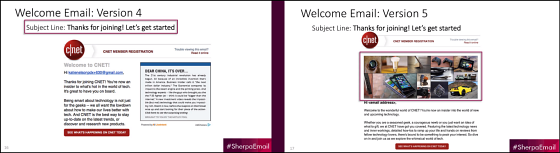

Version 4 and Version 5

Version 4 was essentially the same as Version 3 with one small change — the subject line.

Prior to this test, the brand had been using the subject line “Welcome to CNET! Let’s get started.” This subject line had been tested many times and had consistently won. For this treatment, the subject line was subtly changed to “Thanks for joining! Let’s get started.”

Version 5 used the same subject line as Version 4 and also included an image, which was positioned differently. Instead of featuring an editor, this image was a collage of the different types of things users would expect to see on the site.

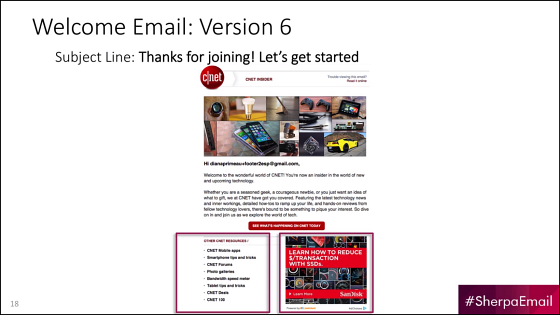

Version 6

This last treatment, Version 6, utilized all the winning changes from the previous four treatments but added in an ad unit and a block of content. For this content block, only high-traffic pieces were selected.

Results

Through all of this testing, Diana and her team learned that simple worked best. Each test produced an increase in both opens and clickthrough. The welcome email started with a 32% open rate and ended with a 46% open rate. Likewise, the clickthrough rate increased from 12% to 21% over the course of the tests.
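To put those figures in perspective, it helps to separate the change in percentage points from the relative lift. The short sketch below does that arithmetic with the rates reported above; the helper name `lift` is an assumption for illustration, not something from the presentation.

```python
def lift(before: float, after: float) -> tuple[float, float]:
    """Return (change in percentage points, relative change in percent)."""
    absolute = after - before
    relative = (after - before) / before * 100
    return absolute, relative


# Rates reported in the article, expressed in percent.
open_abs, open_rel = lift(32, 46)
click_abs, click_rel = lift(12, 21)

print(f"Open rate: +{open_abs:.0f} points ({open_rel:.1f}% relative lift)")
print(f"Clickthrough rate: +{click_abs:.0f} points ({click_rel:.1f}% relative lift)")
```

By this calculation, opens rose 14 points (a relative lift of about 44%) and clickthrough rose 9 points (a relative lift of 75%). The article does not report list sizes, so this says nothing about statistical significance.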

“Our hypothesis was wrong. For our brand, less is better,” Diana said. “I really want to stress that — it’s for our brand.”

“Every brand is different, so I really encourage you, if you’re going to do this, that you do test and make sure that what works is good for your brand,” she concluded.

You can follow Kayla Cobb, Reporter, MECLABS Institute, on Twitter at @itskaylacobb.


Thanks for sharing this info. We all just need to continue testing and learning. What A/B testing tools would you recommend?

Hey David,

Thank you for your comment. There are several great (and free) marketing tools out there that can help with A/B testing and marketing strategy in general. I would recommend looking through the resources mentioned in these articles:

Online Testing: 3 benefits of using an online testing process (plus 3 free tools)

Free Marketing Tools: 11 worksheets, spreadsheets, and calculators to help make your next optimization project a success

Thanks for reading, and let me know if you have any other questions.

Best,

Kayla