I’ve got a research question. Now what do I do with it?

A few weeks ago, Daniel Burstein wrote a blog post about writing research questions. That post emphasized the importance of asking “which” rather than “what” questions, because a “which” question is clearly testable.

You might ask, “Which page format results in the most lead submissions?” or “Which price point generates the most revenue?” Both questions are clearly stated and include two key pieces of information:

- An independent variable you are going to test

- The dependent variable you will use to measure your results

To know if something is better, first you must know if it is different

With the research question on paper, we can easily create a hypothesis. For the first question, it would be: “All page formats will result in the same number of lead submissions.” This type of hypothesis is so famous in research circles that it has a name: “The Null Hypothesis.”

In general terms, the null hypothesis states that varying the independent variable will result in no change to the dependent variable.

In other words, you’re testing to see if changing the page (the independent variable) will change the number of leads (the dependent variable). After all, if there is no change, one cannot be any better than the other.
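To make the null hypothesis concrete, here is a minimal sketch of one common way to check it for two pages: a two-proportion z-test. The function name and the lead and visit counts are made up for illustration. This is a textbook test written with Python's standard library, not the MECLABS Test Protocol.

```python
import math

def two_proportion_z_test(conv_a, n_a, conv_b, n_b):
    """Return (z, two_sided_p) for H0: both pages convert at the same rate."""
    p_a = conv_a / n_a
    p_b = conv_b / n_b
    # Under the null hypothesis both pages share one pooled conversion rate.
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if se == 0:
        # No conversions at all (or all conversions): no evidence of a difference.
        return 0.0, 1.0
    z = (p_b - p_a) / se
    # Two-sided p-value from the standard normal distribution.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value

# Made-up counts for illustration only: leads and visits for two pages.
z, p_value = two_proportion_z_test(conv_a=70, n_a=1000, conv_b=100, n_b=1000)
reject_null = p_value < 0.05  # 0.05 is a conventional threshold, assumed here
```

A small p-value suggests the pages really do differ. Only then does it make sense to ask which one is better.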

Why not “The new layout will result in the most lead submissions,” you ask? Because there is no concrete reason to assume that there will be a change. Besides, if you already knew the effect of A on B, why would you need to test it?

Control vs. Treatment(s)

In most cases, there will be an existing page that all new versions will be compared to. This page is termed the “Control,” and all new pages are dubbed “Treatments” to guide comparisons later.

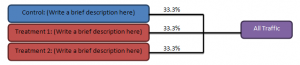

The next step in testing your research question is to decide on the most appropriate test structure. This will depend on the number of variations you will be testing, and on the amount of traffic your site receives. At MECLABS, our research analysts do this visually using a small flowchart to represent the flow of traffic to the control and treatment pages.

Take your latest research question and write it down. Below it, write out the following until you have listed all the variations to be tested.

On the right-hand side of the page, write “All Traffic.” At this point, you need to determine if your traffic should be evenly split among all the pages being tested or if you will pull only a small portion of traffic into the treatment pages and maintain most of the flow to the existing Control page.

At MECLABS, our analysts use the Test Protocol document to determine how many site visits are required to achieve valid results given a set of treatments and typical conversion rates on the existing page. This process is covered in our Online Testing Course.

Split tests

Draw lines between “All Traffic” and the pages to the left showing the split, and mark each with the percentage of traffic to be sent along that path (see below). This design is called a split test. It is very important that traffic is randomly split between the treatments and control. On a high-traffic site, the percentage sent to the control can be higher than what is sent to the treatments, as long as you will easily meet the required minimum sample size.
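The random, percentage-based split described above can be sketched in a few lines. The page names, the 50/25/25 percentages, and the `assign_page` helper are assumptions for illustration, not the behavior of any particular testing tool.

```python
import random

def assign_page(weights, rng=random):
    """Pick one page at random, with probability proportional to its weight."""
    pages = list(weights)
    return rng.choices(pages, weights=[weights[p] for p in pages], k=1)[0]

# Hypothetical split: 50% to the control, 25% to each treatment.
split = {"control": 50, "treatment_1": 25, "treatment_2": 25}

rng = random.Random(42)  # seeded only so the sketch is repeatable
counts = {page: 0 for page in split}
for _ in range(10_000):
    counts[assign_page(split, rng)] += 1
```

Over many visits, the counts settle close to the chosen percentages. A real tool would typically also remember each visitor's assignment so that a returning visitor sees the same page.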

Multi-factorial tests

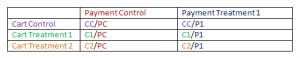

The split test design works for tests of only one step, but sometimes we need to test more than one step in a process. In that case, we have two independent variables that we will manipulate separately. For example, if your research question is, “Which checkout process generates the most revenue?” you might want to test several variations of cart layout and payment page layout at the same time.

If you were to test [Cart and Payment Treatment 1] against [Cart and Payment Treatment 2], your results might tell you that [CT and PT 1] produced 15% more revenue than [CT and PT 2], but you would never learn that Cart Treatment 1 paired with Payment Treatment 2 would have yielded an even higher lift!

Essentially, you have two research questions: “Which cart design will generate the most revenue?” and “Which payment design will generate the most revenue?” This means you have two independent variables and one dependent variable.

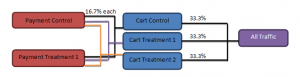

To test multi-step processes, researchers use a research design called a factorial test. Each variation in each independent variable is tested together so that all combinations are tested. A typical factorial design is represented below.

Because the traffic is sent evenly to each pairing, the factorial research design accounts for the natural dependency between steps 1 and 2. If a viewer does not like Cart Treatment 1, they will not proceed to the Payment step, but since you have also tested other combinations of Cart and Payment, you can assume the effect is balanced out.
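As a minimal sketch, the full set of pairings can be generated rather than written out by hand. The design names below are placeholders, and the three-cart, two-payment layout is only an example.

```python
from itertools import product

# Placeholder names for three cart designs and two payment designs.
cart_designs = ["cart_control", "cart_treatment_1", "cart_treatment_2"]
payment_designs = ["payment_control", "payment_treatment_1"]

def full_factorial(*factors):
    """Return every combination of one level from each factor."""
    return list(product(*factors))

cells = full_factorial(cart_designs, payment_designs)

# Even traffic split: each pairing receives the same share of all traffic.
share_per_cell = 100 / len(cells)
```

Here `cells` holds six cart and payment pairings, and each one would receive roughly one sixth of the traffic.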

A factorial test requires a lot more traffic than a split test to achieve validity, but it also yields a lot more insight. From the results of a factorial test, you can infer not only the winning combination but also which treatment of each step was most successful. This subtle distinction comes in handy if you then want to test further refinements of the process.
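Here is a rough sketch of how both kinds of insight can be read from the same results table. The visit and conversion counts are invented placeholders, conversion rate stands in for revenue to keep the example short, and `marginal_rates` is a hypothetical helper.

```python
from collections import defaultdict

# Invented (visits, conversions) per cart/payment pairing, for illustration only.
results = {
    ("cart_control", "payment_control"): (1000, 70),
    ("cart_control", "payment_treatment_1"): (1000, 80),
    ("cart_treatment_1", "payment_control"): (1000, 75),
    ("cart_treatment_1", "payment_treatment_1"): (1000, 95),
    ("cart_treatment_2", "payment_control"): (1000, 60),
    ("cart_treatment_2", "payment_treatment_1"): (1000, 72),
}

def marginal_rates(results, factor_index):
    """Pool the cells that share a level of one factor and return its rate."""
    visits = defaultdict(int)
    conversions = defaultdict(int)
    for cell, (n, c) in results.items():
        level = cell[factor_index]
        visits[level] += n
        conversions[level] += c
    return {level: conversions[level] / visits[level] for level in visits}

# The winning combination: the single best pairing.
best_cell = max(results, key=lambda cell: results[cell][1] / results[cell][0])

# The best treatment of each step, averaged over the other step.
cart_rates = marginal_rates(results, factor_index=0)
payment_rates = marginal_rates(results, factor_index=1)
best_cart = max(cart_rates, key=cart_rates.get)
best_payment = max(payment_rates, key=payment_rates.get)
```

These comparisons only rank the raw rates. Whether any difference is real still has to be checked with a significance test on a large enough sample.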

Some situations make a factorial design impractical. It may not always make sense to pair all the possible combinations together, in which case a factorial design is not possible and a split test should be used instead.

Don’t make the mistake of forming all but one or two pairs of the factorial design. An asymmetrical design does not neutralize the dependency of the second step on the first. In other words, if every factor isn’t matched with every possible other factor, you could overlook a potentially big lift.
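One simple safeguard is to check a planned design for missing pairs before launching it. The sketch below assumes a two-step process, and `missing_pairs` and the design names are hypothetical.

```python
from itertools import product

def missing_pairs(planned_pairs, cart_designs, payment_designs):
    """Return the cart/payment combinations a planned design leaves out."""
    full = set(product(cart_designs, payment_designs))
    return sorted(full - set(planned_pairs))

carts = ["cart_control", "cart_treatment_1"]
payments = ["payment_control", "payment_treatment_1"]

# Hypothetical plan that skips one combination.
planned = [
    ("cart_control", "payment_control"),
    ("cart_control", "payment_treatment_1"),
    ("cart_treatment_1", "payment_treatment_1"),
]

gaps = missing_pairs(planned, carts, payments)
```

An empty result means the design is a complete factorial. Anything else lists the pairings that would go untested.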

Traffic volume is crucial for factorial tests

One common reason some marketers don’t run multi-factorial tests is a low-traffic page. For example, with only 3,000 hits a month, a 7% historical conversion rate, and six treatment pairs (2 payment designs x 3 cart designs), it could take as long as three years to validate the factorial design shown above!
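To see why the timeline stretches into years, here is a back-of-the-envelope sketch using the standard two-proportion sample size formula. The article does not say what lift or confidence level sits behind the three-year figure, so the 10% relative lift, 5% significance level, and 80% power below are assumptions, and this calculation is not the MECLABS Test Protocol.

```python
import math
from statistics import NormalDist

def sample_size_per_cell(base_rate, relative_lift, alpha=0.05, power=0.80):
    """Visits needed per cell to detect the lift with a two-sided z-test."""
    p1 = base_rate
    p2 = base_rate * (1 + relative_lift)
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)
    z_beta = NormalDist().inv_cdf(power)
    variance = p1 * (1 - p1) + p2 * (1 - p2)
    return math.ceil((z_alpha + z_beta) ** 2 * variance / (p2 - p1) ** 2)

def months_to_complete(n_per_cell, cells, monthly_visits):
    """Months until every cell has its visits, with traffic split evenly."""
    return n_per_cell * cells / monthly_visits

# Figures from the example above: 7% conversion, six pairs, 3,000 hits a month.
# The 10% relative lift is an assumed minimum effect worth detecting.
n_per_cell = sample_size_per_cell(base_rate=0.07, relative_lift=0.10)
months = months_to_complete(n_per_cell, cells=6, monthly_visits=3000)
```

Under these assumptions the estimate runs to several years. Testing fewer cells, or only looking for a larger lift, shortens it considerably.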

When faced with an unreasonable completion time, you have a few choices to make. You can test fewer treatments, resulting in quicker accumulation of hits on each treatment, or you can test one step of the checkout process at a time.

You also have the option to test pairs of pages in a split test, losing the additional insights given by the factorial design. All of those options will reduce the time needed to validate the test.

Some marketers try to learn which treatment works best through sequential tests. One treatment is left online for a set period, followed by the next treatment, and so forth. This usually happens because there was no test design to begin with, and marketers are comparing results after the fact: one page was live or one email was sent, and then the page was changed or another email was sent.

This could also be because marketers do have a test design but are unable to split traffic. After all, if you can only direct traffic to a single page design at a time, you can only test pages sequentially. (However, with the wide availability of both free and paid optimization tools, this situation has become quite rare.)

Sequential tests are extremely prone to history effects, where an outside event or phenomenon affects the viewers’ behaviors on the site from one moment in time to another (see our Online Testing Course for more information on History Effects).

For example, an email sent out to the mailing list will increase traffic to whatever homepage treatment is currently online, distorting the actual effect of the design changes. This effect is usually noticeable as a sudden rise on an analytics traffic or conversion chart. Although it is not an optimal research design, this type of study can distinguish between a control and a treatment page. Results should only be interpreted if the possibility of history effect has been considered and found insignificant.

Related Resources:

Marketing Optimization: You can’t find the true answer without the right question

Artificial Optimization: Why at least 40% of marketers shouldn’t test

Marketing Optimization: How to determine the proper sample size